Connect any AI Software to

Models Provided by

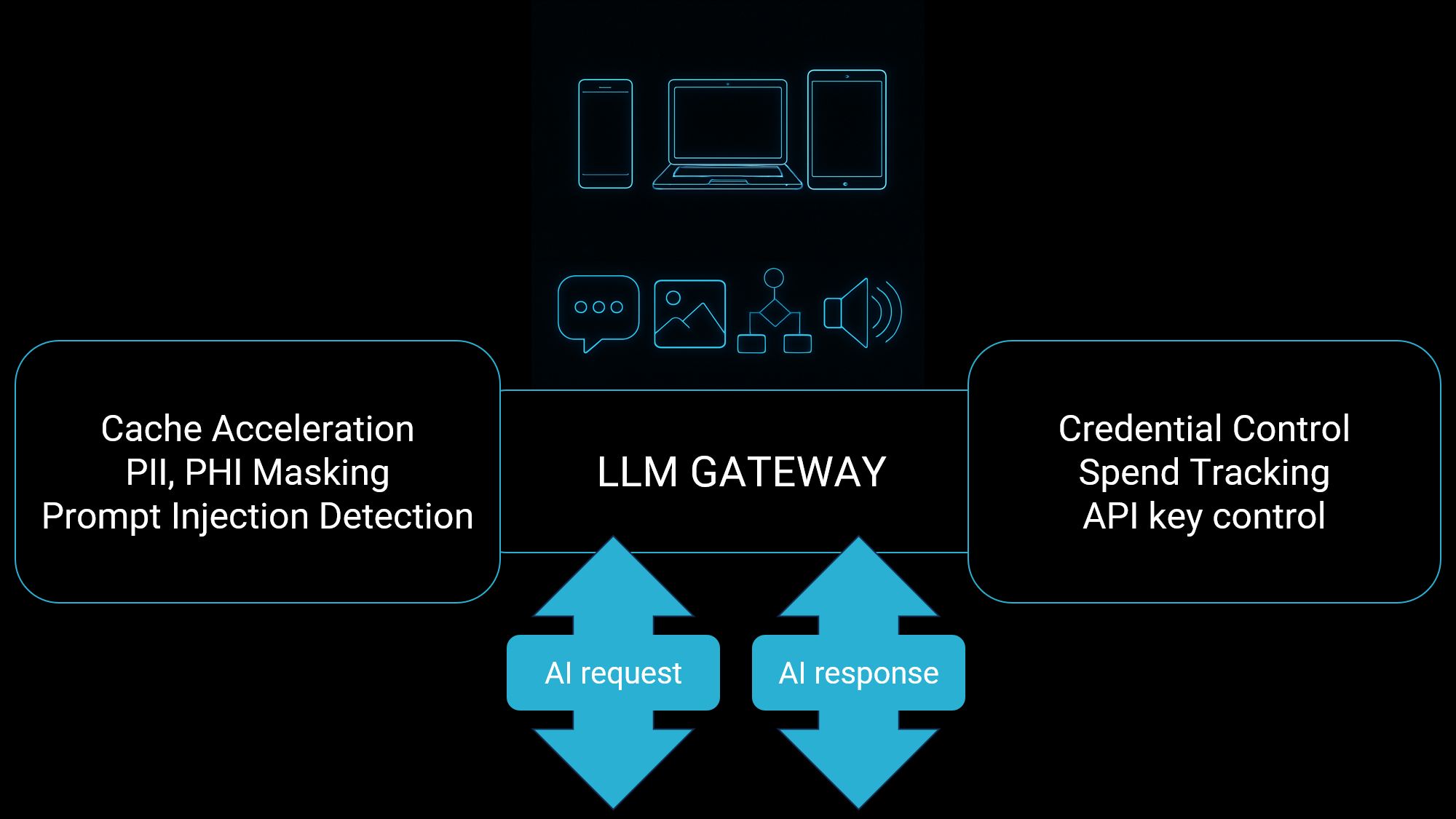

Protect your confidential data

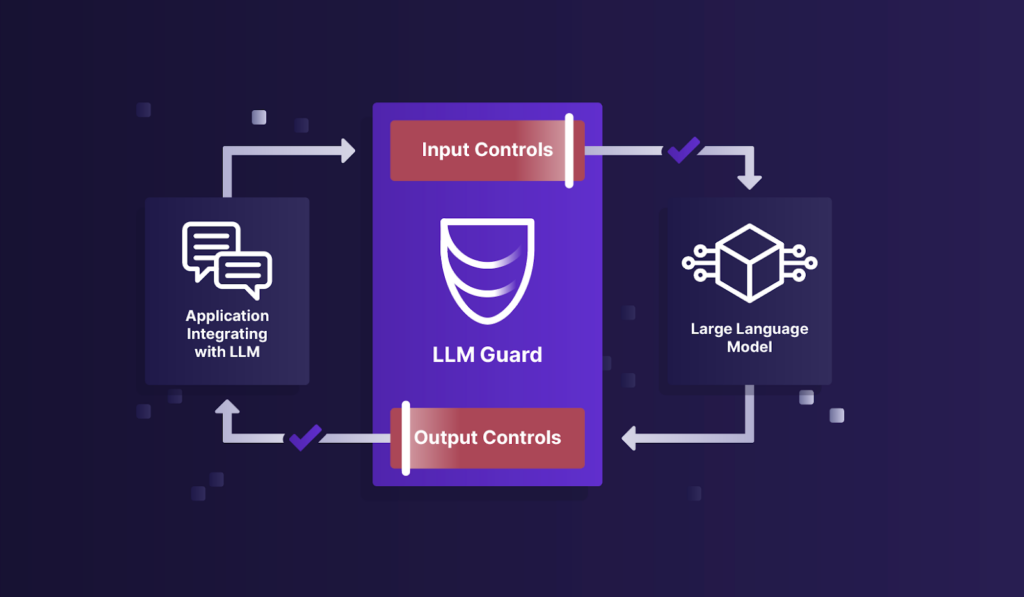

Secure your LLM

- Sanitization of Input/Output: Prevents data leakage and ensures safe interaction with LLMs.

- Detection of Harmful Language: Scans both input and output to detect bias, toxicity, or inappropriate content.

- Protection Against Prompt Injection: Defends the integrity of LLMs by filtering malicious prompts.

- Privacy Safeguards: Anonymizes sensitive data to protect user privacy.
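As a rough illustration of what the safeguards above can look like in practice, here is a minimal Python sketch of a pre-processing step that rejects obviously suspicious prompts and anonymizes e-mail addresses and phone-like numbers before the text reaches an LLM. It is not this product's implementation: the function names (`anonymize`, `looks_like_injection`, `sanitize_prompt`), the regular expressions, and the deny-list phrases are all hypothetical and chosen only for the example. Real PII detection and prompt-injection defense need much broader coverage than a few patterns.

```python
import re

# Hypothetical patterns for illustration only; real PII detection
# needs far broader coverage than these two expressions.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

# Hypothetical deny-list of phrases sometimes seen in prompt-injection attempts.
INJECTION_PHRASES = (
    "ignore previous instructions",
    "disregard the system prompt",
)


def anonymize(text: str) -> str:
    """Replace e-mail addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def looks_like_injection(text: str) -> bool:
    """Return True if the text contains a deny-listed phrase (case-insensitive)."""
    lowered = text.lower()
    return any(phrase in lowered for phrase in INJECTION_PHRASES)


def sanitize_prompt(prompt: str) -> str:
    """Reject suspicious prompts, then anonymize what remains."""
    if looks_like_injection(prompt):
        raise ValueError("prompt rejected: possible prompt injection")
    return anonymize(prompt)
```

Calling `sanitize_prompt("Mail jane.doe@example.com or call +1 415 555 0100")` would return the text with `[EMAIL]` and `[PHONE]` placeholders in place of the original values. The same `anonymize` step could be applied to model output before it is returned to the user.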

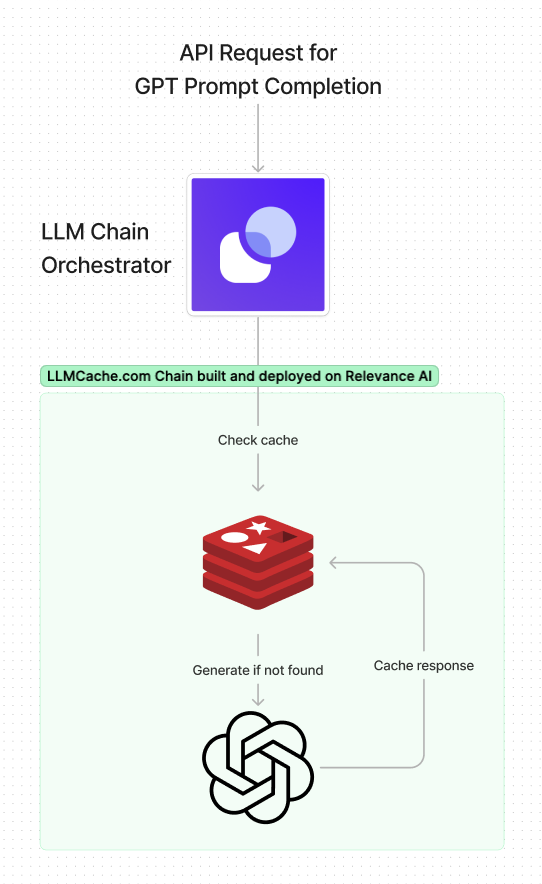

Accelerate your responses

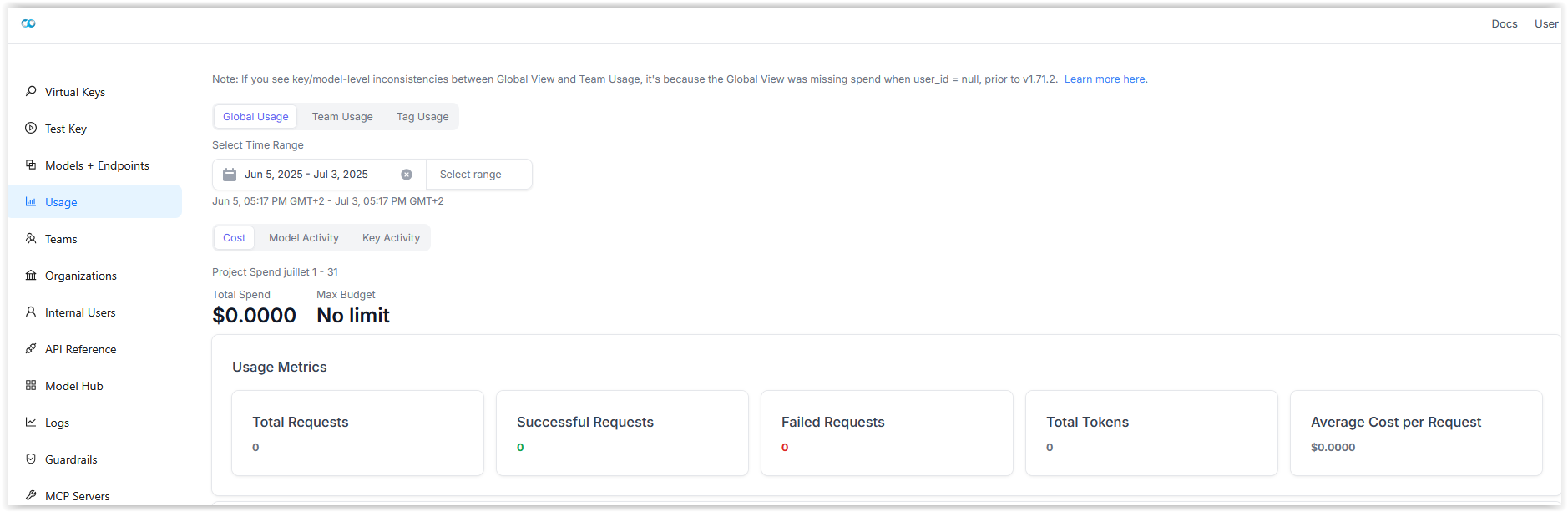

Centralize your cost
